High-Performance MXM GPU Modules for Embedded and Edge AI

Compact, Rugged, and PCIe 5.0-Ready

Engineered to meet the rigorous demands of industrial applications, ADLINK’s embedded GPU solutions leverage cutting-edge NVIDIA and Intel GPU technology to elevate graphics processing to new heights. Designed for accelerated rendering, AI inferencing, and high-performance computing, ADLINK GPUs enhance system responsiveness while minimizing I/O latency.

To address industrial constraints, ADLINK offers compact, power-efficient MXM GPUs for space-constrained environments and professional PCI Express graphics cards for superior graphics quality and high-performance computing. Built for durability and longevity, ADLINK’s embedded GPU solutions are designed to support the evolving needs of industries such as healthcare, manufacturing, logistics, transportation, aerospace, telecommunications, and retail, delivering reliable performance for years to come.

NVIDIA

- Blackwell

- Ada Lovelace

- Ampere

- Turing

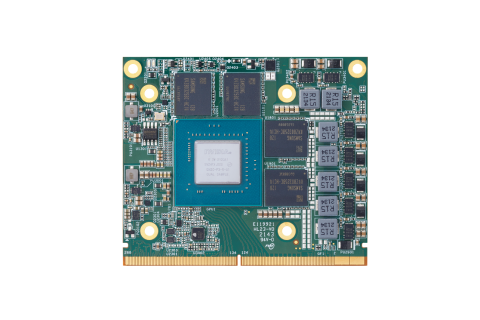

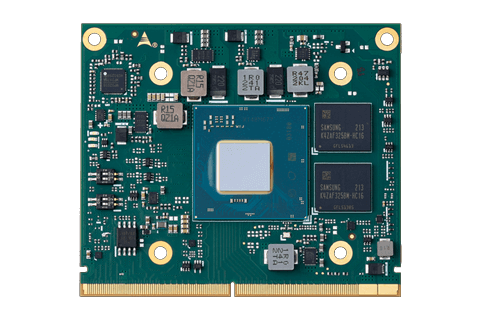

- EGX-MXM-BW5000: Embedded MXM GPU Module Powered by NVIDIA® RTX PRO™ 5000 Blackwell

- EGX-MXM-BW4000: Embedded MXM GPU Module Powered by NVIDIA® RTX PRO™ 4000 Blackwell

- EGX-MXM-BW2000: Embedded MXM GPU Module Powered by NVIDIA® RTX PRO™ 2000 Blackwell

- EGX-MXM-BW500: Embedded MXM GPU Module Powered by NVIDIA® RTX PRO™ 500 Blackwell

Product Features

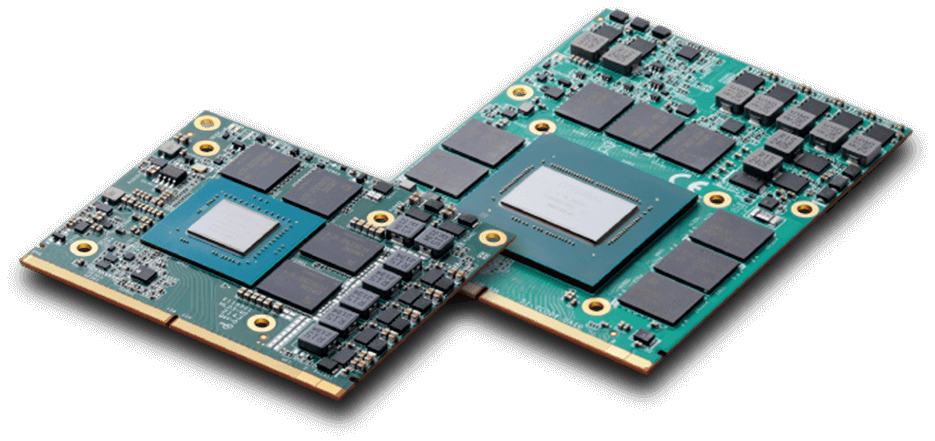

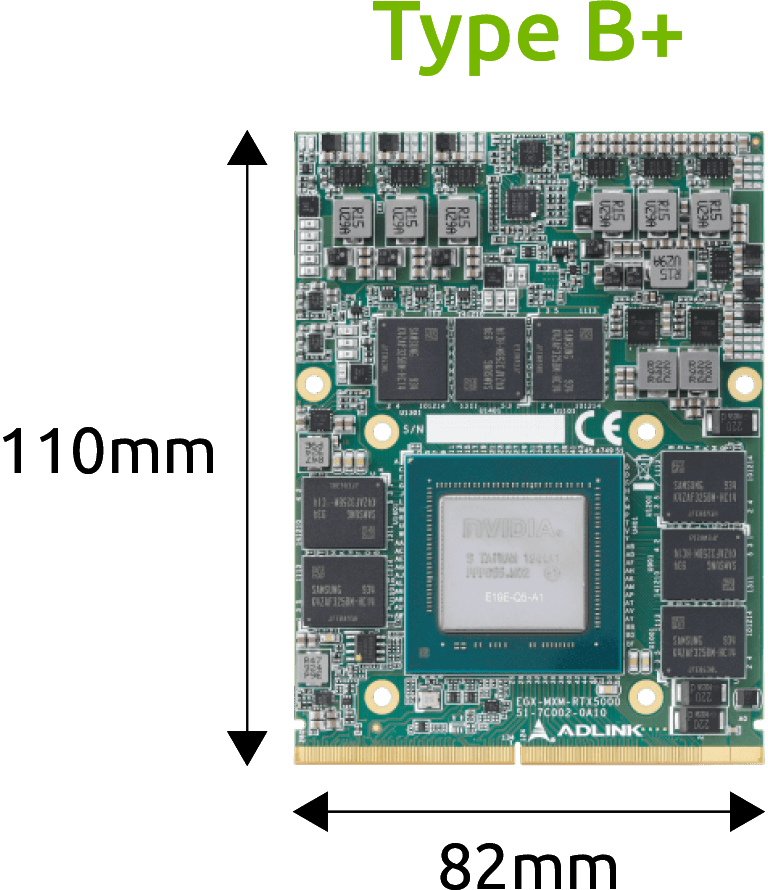

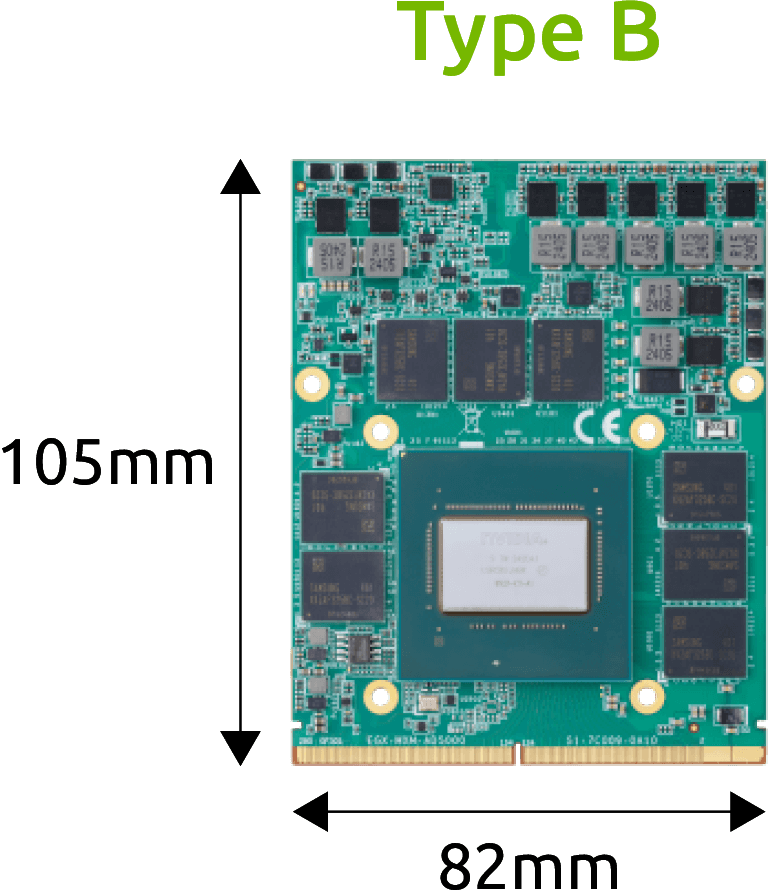

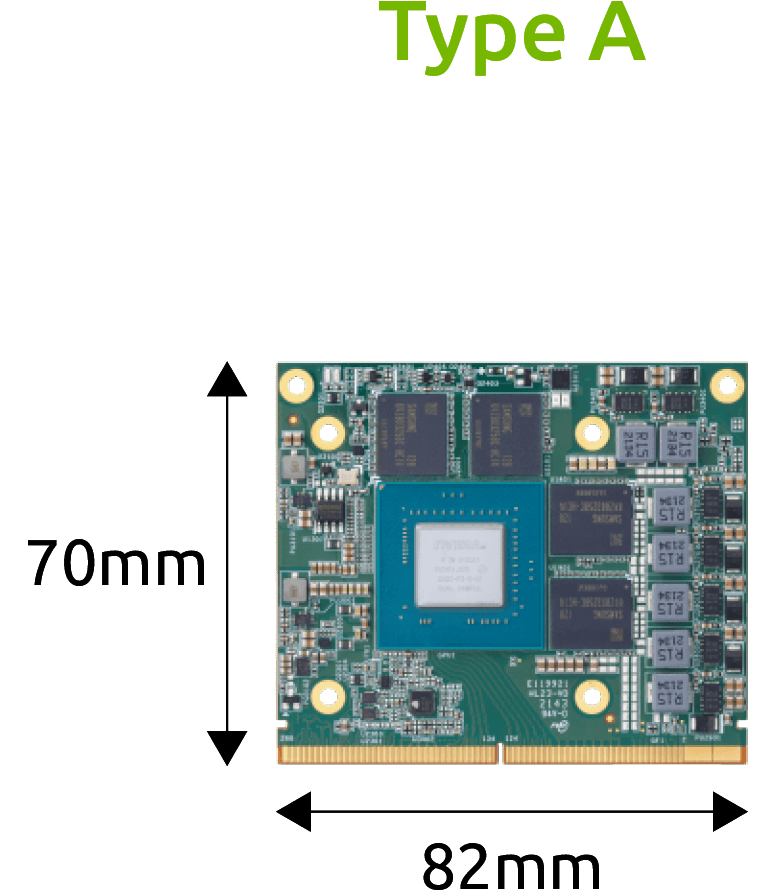

Form Factors

Why ADLINK MXM GPU Modules

- Flexible Customization: Tailored to your specific performance, form factor, and thermal requirements.

- High Performance: Supports AI inferencing, machine learning, and advanced graphics processing.

- Extended Lifecycle Support: Industrial-grade longevity ensures long-term availability and compatibility.

- Real-time Technical Support: ADLINK offers global and local services with 24/7 engineering support.

Major Applications

- AI Computing

- Automation

- Autonomous Vehicles

- Healthcare

- Smart Retail